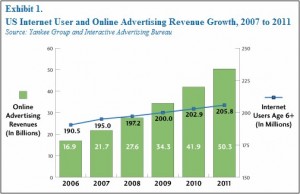

Online media is growing up. All the big media players (News, Fairfax etc.) are currently fighting it out with the new kids on the block, the online pure plays (Google, Microsoft, Realestate.com.au etc.). The prize is the rapidly growing pool of online advertising revenue, predicted to pass the US$50 billion mark next year. Historically, the provider with the most content has attracted the most consumers, in turn attracting the most advertisers. Eventually this network effect led to breakaway market leaders establishing dominance and gradually raising the barriers to entry. Holding all the content was a licence to print money.

Slowly, general search tools like Google and Bing, as well as vertical-specific search sites like Zillow, started gaining momentum. They established themselves as "middle men", generating advertising revenue while helping people find the content they were looking for more efficiently. They were not interested in hosting or contributing content; instead, they focused on delivering it. They realised that the money is in front-end distribution, not in back-end content creation. Google in particular understands this; the publishers do not. The publishers hate that Google News provides a beautiful user interface for accessing their content easily and for free, yet despite their threats they do not block Google's bots, because they need a strong online delivery channel and half their traffic comes from search engines.

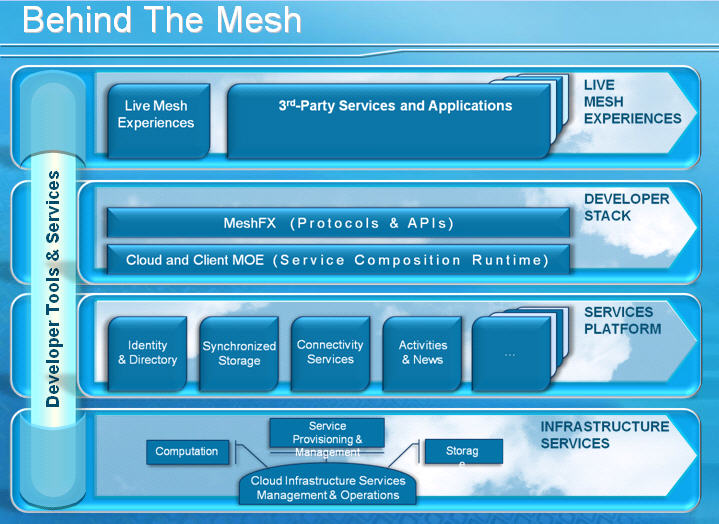

This kind of reluctant symbiosis also appears outside news content, extending into real estate and video, to name just a few. Microsoft are attempting to flip the relationship by having Bing Video index Google's YouTube content, while Google Maps is indexing real estate content.

The big media content creators have recognised one thing at least: for the partnership to work, each participant has to have a stake in its success (or failure). Licensing deals, share stakes and other structures are occurring left, right and centre as the various players align themselves. This "sorting out" period has amusing side effects, like media companies being on both sides of the legal fence. Eventually the flurry of deals will subside and the media companies will realise that YouTube is no different to their old printing press and delivery operation: it is a necessary distribution channel that takes a commission. If your printing press operator decided to take your boring black-and-white rag and turn it into a glossy high-end publication that successfully retailed at twice the price (despite having the same content), then good luck to them; in the end you benefit from a more valuable distribution channel.

For now we are faced with more sabre rattling by the media companies, constant partnership renegotiations and declining print revenues. As with any market, the digital media market will eventually reach an unsteady equilibrium: some sort of duopoly, with Google and Microsoft as the distribution channels and the old media companies aligned behind them as the content creators. It is unlikely that the print rivers of gold will be seen in one place again, but sharing these rivers across a wider and more competitive landscape will benefit consumers. Sooner or later, content producers will realise that revenue is a balance between consumption price and volume; withholding content only encourages piracy and other forces that undermine their progress towards a fair and efficient new distribution channel.